Hello,

I'm running TrueNAS (25.04) in Proxmox, with two mirrored 15 TB Kioxia CM7-R drives passed through.

TrueNAS has 64 GB of RAM and 8 cores assigned to it.

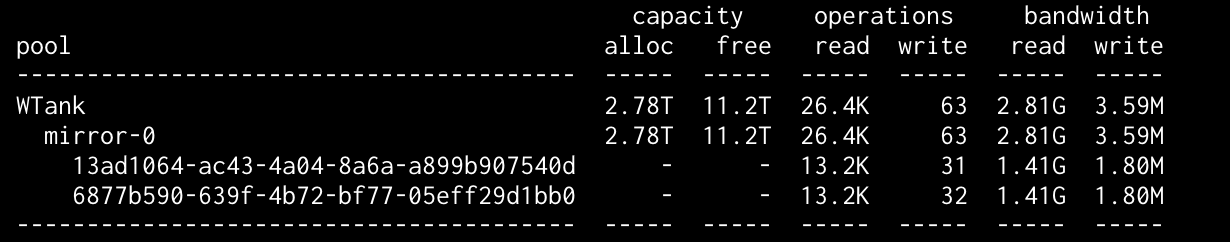

I ran `zpool iostat`:

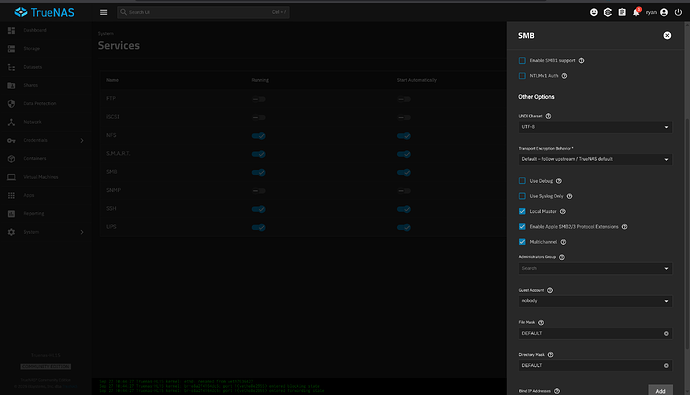

Over SMB I'm getting at best 190 MB/s on an Intel X710 10 GbE connection, even though iperf shows the link itself reaching 10 Gbit/s.
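To put the 190 MB/s in perspective, here is a quick back-of-the-envelope check in shell (awk). It assumes decimal units (1 MB = 10^6 bytes, 10 GbE = 10 Gbit/s) and ignores TCP/SMB overhead, so the ceiling it prints is slightly optimistic; the function names are just illustrative.

```shell
# Back-of-the-envelope check: observed SMB throughput vs. nominal 10 GbE rate.
# Assumes decimal units (1 MB = 10^6 bytes) and ignores TCP/SMB overhead,
# so real achievable payload throughput is somewhat below this ceiling.

# link_mb_per_s GBIT: nominal line rate in MB/s for a link of GBIT Gbit/s
link_mb_per_s() {
    awk -v g="$1" 'BEGIN { printf "%.0f\n", g * 1000 / 8 }'
}

# pct_of_link OBSERVED_MB_S GBIT: observed throughput as a percentage of line rate
pct_of_link() {
    awk -v mb="$1" -v g="$2" 'BEGIN { printf "%.1f\n", mb / (g * 1000 / 8) * 100 }'
}

echo "10 GbE line rate: $(link_mb_per_s 10) MB/s"
echo "190 MB/s is $(pct_of_link 190 10)% of that"
```

Run as-is, it prints a 1250 MB/s line rate and puts 190 MB/s at about 15% of it.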

I can't understand what's not working properly. I had the same setup before, but I had to rebuild the server and start everything from scratch.

Performance was fine previously: the 10 GbE link was always saturated. I just can't figure out what's wrong.

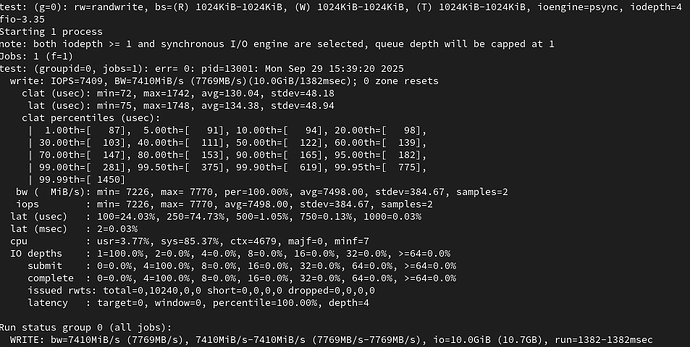

Here are some fio tests. Write performance is the problem; I don't know why it isn't reaching at least 3 to 4 GB/s.

```shell
fio --filename=/mnt/WTank/test --sync=1 --rw=randwrite --bs=1M --numjobs=1 --iodepth=4 --group_reporting --name=test --filesize=10G --runtime=300 && rm /mnt/WTank/test
```

```
test: (g=0): rw=randwrite, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=psync, iodepth=4

Run status group 0 (all jobs):
  WRITE: bw=640MiB/s (671MB/s), 640MiB/s-640MiB/s (671MB/s-671MB/s), io=10.0GiB (10.7GB), run=15993-15993msec
```
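As a sanity check on the write result, the reported bandwidth follows directly from the total I/O and run time (10 GiB in 15,993 ms). One caveat visible in the job line: `ioengine=psync` is a synchronous engine, and the fio documentation notes that raising `iodepth` above 1 does not affect synchronous engines, so this run effectively had one 1 MiB write in flight at a time, with `--sync=1` additionally making each write synchronous. The `bw_from_run` helper below is a hypothetical sketch, not part of fio.

```shell
# Recompute fio's average bandwidth from total I/O and run time.
# bw_from_run GIB MSEC: prints MiB/s (binary) and MB/s (decimal), like fio does
bw_from_run() {
    awk -v gib="$1" -v ms="$2" 'BEGIN {
        bytes = gib * 1024 * 1024 * 1024   # GiB -> bytes
        secs  = ms / 1000                  # milliseconds -> seconds
        printf "%.0f MiB/s (%.0f MB/s)\n", bytes / secs / 1048576, bytes / secs / 1000000
    }'
}

# Write run from above: io=10.0GiB, run=15993msec
bw_from_run 10 15993
```

This prints `640 MiB/s (671 MB/s)`, matching fio's own summary line.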

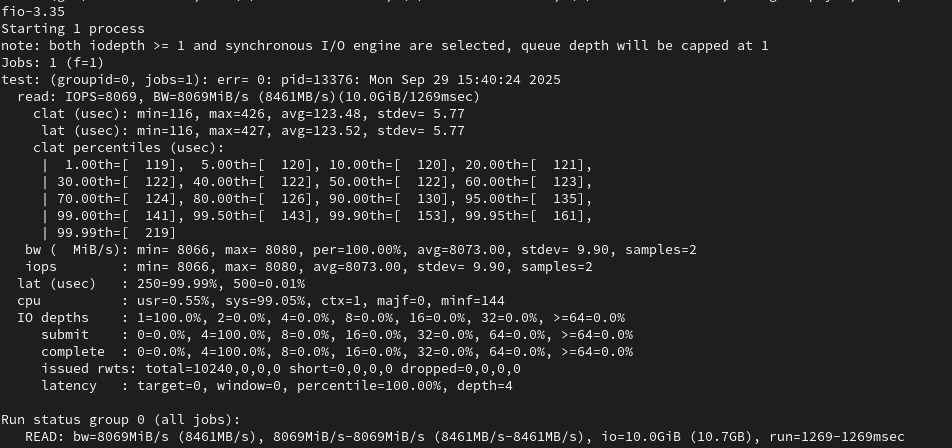

```shell
fio --filename=/mnt/WTank/test --sync=1 --rw=randread --bs=1M --numjobs=1 --iodepth=4 --group_reporting --name=test --filesize=10G --runtime=300 && rm /mnt/WTank/test
```

```
test: (g=0): rw=randread, bs=(R) 1024KiB-1024KiB, (W) 1024KiB-1024KiB, (T) 1024KiB-1024KiB, ioengine=psync, iodepth=4

  READ: bw=9.99GiB/s (10.7GB/s), 9.99GiB/s-9.99GiB/s (10.7GB/s-10.7GB/s), io=10.0GiB (10.7GB), run=1001-1001msec
```
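The read figure can be checked the same way: 10 GiB over a 1,001 ms run works out to the reported 9.99 GiB/s. Since the 10 GiB test file is well under the 64 GB of RAM assigned to TrueNAS, and the previous file had just been removed so fio had to lay out a fresh one, some or all of these reads may have been served from the ZFS ARC rather than the drives; that is a possibility to keep in mind, not something these numbers prove. The helper names below are hypothetical.

```shell
# read_bw GIB MSEC: average bandwidth in GiB/s
read_bw() {
    awk -v gib="$1" -v ms="$2" 'BEGIN { printf "%.2f GiB/s\n", gib / (ms / 1000) }'
}

# fits_in_ram FILE_GIB RAM_GB: "yes" if the test file is smaller than the RAM size
# (RAM treated as decimal GB here; the conclusion is the same either way)
fits_in_ram() {
    awk -v f="$1" -v r="$2" 'BEGIN { print ((f * 1073741824 < r * 1000000000) ? "yes" : "no") }'
}

read_bw 10 1001      # read run from above: io=10.0GiB, run=1001msec
fits_in_ram 10 64    # 10 GiB test file vs. 64 GB assigned to the VM
```

A test file several times larger than RAM would make it less likely that the cache dominates the result.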