I have a B650 (socket AM5) motherboard with the following PCIe specs from the manual:

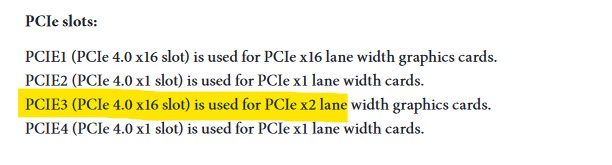

PCIE1 (PCIe 4.0 x16 slot) is used for PCIe x16 lane width graphics cards.

PCIE2 (PCIe 4.0 x1 slot) is used for PCIe x1 lane width cards.

PCIE3 (PCIe 4.0 x16 slot) is used for PCIe x2 lane width graphics cards.

PCIE4 (PCIe 4.0 x1 slot) is used for PCIe x1 lane width cards.

and an LSI 9300-16i HBA. When I originally set this up in the HL15, I used PCIE1 for the HBA as I had no plans for a GPU and a lot of ignorance about all things PCIe. The system is running TrueNAS and working fine.

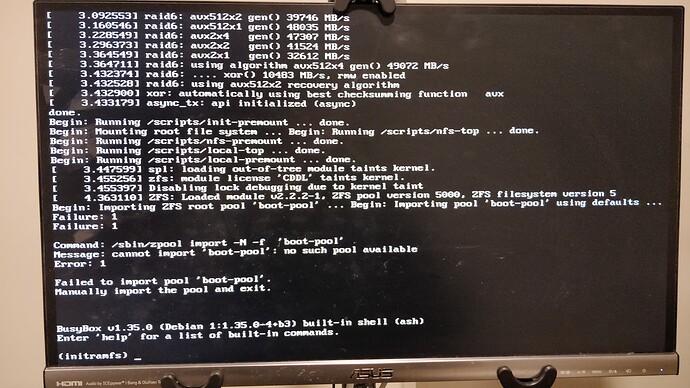

I’d like to move the HBA to PCIE3 so I can install something else in PCIE1. But when I do this, the ZFS pool doesn’t come up in TrueNAS (it shows as degraded with only four disks or so; I’m not sure exactly, because the UI is slow to update disk info). I moved the card back to PCIE1 and all is OK again. I repeated that two or three times, so it’s not a loose cable problem.

When I put the HBA in PCIE3, the motherboard BIOS does seem to show the HBA and that it has 12 drives attached (which is the number of bays I have populated), but I’m not an expert.

This is without putting anything else in PCIE1. First, I’m just trying to move the HBA.

Should PCIE3 support the LSI 9300-16i? Do I need to reset something in the BIOS or in TrueNAS? Will it only support two of the four SFF-8643 connectors in that slot?
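To put rough numbers on the lane-width question, here is a minimal sketch of the theoretical link bandwidth at different widths. It assumes the 9300-16i links at PCIe 3.0 speed (8 GT/s per lane) even in a PCIe 4.0 slot, and it only accounts for 128b/130b line encoding, so real throughput would be somewhat lower. The function name `link_gb_per_s` is just something I made up for illustration.

```python
# Theoretical PCIe bandwidth per direction, accounting only for line encoding.
# Assumption: the HBA negotiates PCIe 3.0 speed regardless of the slot generation.

GT_PER_S = {3: 8.0, 4: 16.0}   # raw transfer rate per lane, in GT/s
ENCODING = 128 / 130           # 128b/130b encoding used by PCIe 3.0 and 4.0


def link_gb_per_s(gen: int, lanes: int) -> float:
    """Return approximate usable GB/s for a link of the given generation and width."""
    return GT_PER_S[gen] * ENCODING / 8 * lanes


if __name__ == "__main__":
    # x8 is the card's native width; x2 is what the manual lists for PCIE3.
    for lanes in (8, 2, 1):
        print(f"PCIe 3.0 x{lanes}: ~{link_gb_per_s(3, lanes):.2f} GB/s")
```

By this estimate an x2 link would give roughly a quarter of the x8 figure, shared across all 12 drives.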

One thing that confuses me a bit is that these HBA cards (from whatever PCIe generation) seem to be x8, but motherboards seem to have moved away from PCIe x8 slots and have instead dedicated all that PCIe bandwidth to M.2, USB, SATA and a single x16 slot.

Is there not a motherboard that will give me two x8 slots? It was never something I did, but I remember people putting three or four GPUs in a system.

X670 motherboards seem to provide more total PCIe lanes, but the slot configurations seem about the same, so I can’t really tell if getting one of those would provide better support for the LSI 9300-16i in the non-x16 slot.