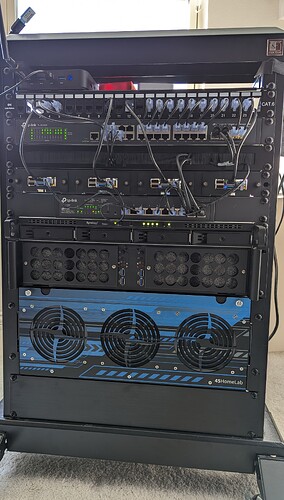

With the inclusion of the HL15, I’ve maxed out my 15U rack.

(But I see a blank 2U space, what gives?!)

Yeah, I know, but that’s sorta reserved, and on the backside there’s a UPS in the way.

I feel like this picture makes it look messier than it does in person. Those 2U dust filters should be cleaned, lol.

Top to Bottom:

1U shelf. Home Assistant runs on its own mini PC here, hence the Zigbee and Thread dongles.

1U 24-port patch panel. All the servers have cables running through this to the switch below. Some keystones are missing so I can fit M.2-to-USB3 drives for the Raspberry Pis.

1U TP-Link Omada switch (24-port, no PoE).

1U brush panel. It just hides some clutter.

1U 4x Raspberry Pi 4 with PoE hats. These are 4 nodes in my Kubernetes cluster.

1U TP-Link Omada 8-port PoE switch.

1U Synology RS820+ with 4x 4TB. This will now be synced with my HL15.

2U Rackchoice 2x Mini-ITX. This holds 2 Turing Pis, with 6 CM4s. This is the other half of my Kubernetes cluster.

4U HL15: fans swapped for Noctuas, intake filters on the front, over 100TB of raw storage, 96GB RAM.

2U placeholder
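For anyone double-checking the "maxed out" claim, here is a minimal Python sketch that tallies the list above. The labels and the `used_units` helper are just illustrative names I made up; the heights are the ones listed in this post.

```python
# Rack layout, top to bottom, as (label, height in rack units).
# Heights come from the list above; labels are shorthand for illustration.
RACK_CAPACITY_U = 15

layout = [
    ("Shelf (Home Assistant mini PC)", 1),
    ("24-port patch panel", 1),
    ("TP-Link Omada 24-port switch", 1),
    ("Brush panel", 1),
    ("4x Raspberry Pi 4", 1),
    ("TP-Link Omada 8-port PoE switch", 1),
    ("Synology RS820+", 1),
    ("Rackchoice 2x Mini-ITX", 2),
    ("HL15", 4),
    ("Placeholder", 2),
]


def used_units(items):
    """Sum the height of every item in the rack."""
    return sum(height for _, height in items)


used = used_units(layout)
free = RACK_CAPACITY_U - used
print(f"{used}U used of {RACK_CAPACITY_U}U, {free}U free")
```

So the placeholder is the only slack left in the rack.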

But wait, there’s more!

So I mentioned the 2U spot was supposed to be filled. Well, between moving the UPS to the back and adding the HL15, there isn’t enough room for everything.

I have another 2U shelf with things that don’t exactly have a nice home.

This holds another mini PC for my Jellyfin server. It’s a Minisforum HN2673 with an Intel Arc A730M.

This PC was going to be attached to the Kubernetes cluster, but there’s some quirkiness with Jellyfin and multiple Intel devices, so that is on hold.

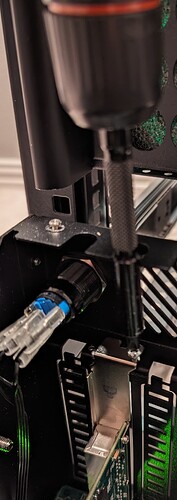

Also pictured is a Framework mainboard that’s attached via Thunderbolt to an RTX 2070 and houses a Google Coral M.2. This is attached to my cluster and runs Frigate, Harbor, and OpenAI Whisper.

The idea is to get a case for the 2070 and Framework board that could fit in the 2U spot on the front (or I might wait until I get a new rack).

Also slightly pictured in red is an ESP32 running OpenDTU for monitoring solar panels, and a Lutron hub for light switches.

Networking:

Currently this is all on 1Gb connections, but my main uplink to my router is two connections in a LAG.

My Synology has a dedicated 10Gb link to the HL15.
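To give a rough sense of why the dedicated link matters for syncing, here is a back-of-the-envelope Python sketch. It assumes the full 16TB of raw Synology capacity gets moved at theoretical line rate, which is optimistic: usable capacity is lower, and real throughput depends on disks and protocol overhead. The `transfer_hours` helper is a made-up name for illustration.

```python
def transfer_hours(size_tb: float, link_gbps: float) -> float:
    """Best-case hours to move size_tb terabytes over a link_gbps link.

    Assumes decimal units (1 TB = 1e12 bytes, 1 Gb/s = 1e9 bits/s)
    and zero protocol or disk overhead.
    """
    bits = size_tb * 1e12 * 8
    seconds = bits / (link_gbps * 1e9)
    return seconds / 3600


# 4x 4TB drives in the Synology (raw, not usable, capacity).
RAW_TB = 4 * 4

for gbps in (1, 10):
    print(f"{gbps:>2} Gb/s: {transfer_hours(RAW_TB, gbps):.1f} h")
```

Even as a best case, that is the difference between an afternoon and a day and a half for a full initial sync.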

Also, a slight annoyance with the HL15: my LTT screwdriver is slightly too wide to reach the screws on the PCIe slots.