Hi 45Home-Labbers:

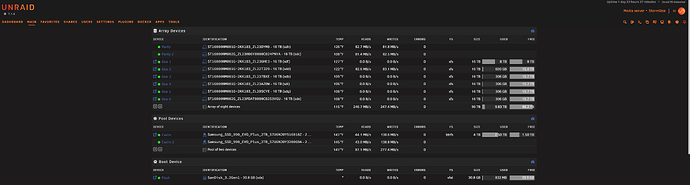

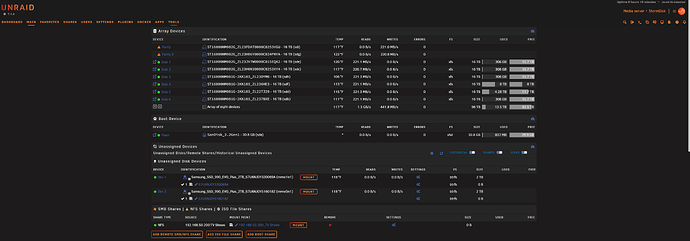

I finally got my HL-8 set up with UnRAID, (8) Seagate Exos Enterprise HDDs, and 2x 2TB NVMe cache drives. All drives passed the disk scans and parity completed.

The HDDs are all Seagate Exos: a mix of (2) 16TiB SAS and (6) 16TiB SATA drives, connected to a Broadcom HBA (LSI 9400-8i, x8 lane PCI Express 3.1 SAS Tri-Mode Storage Adapter).

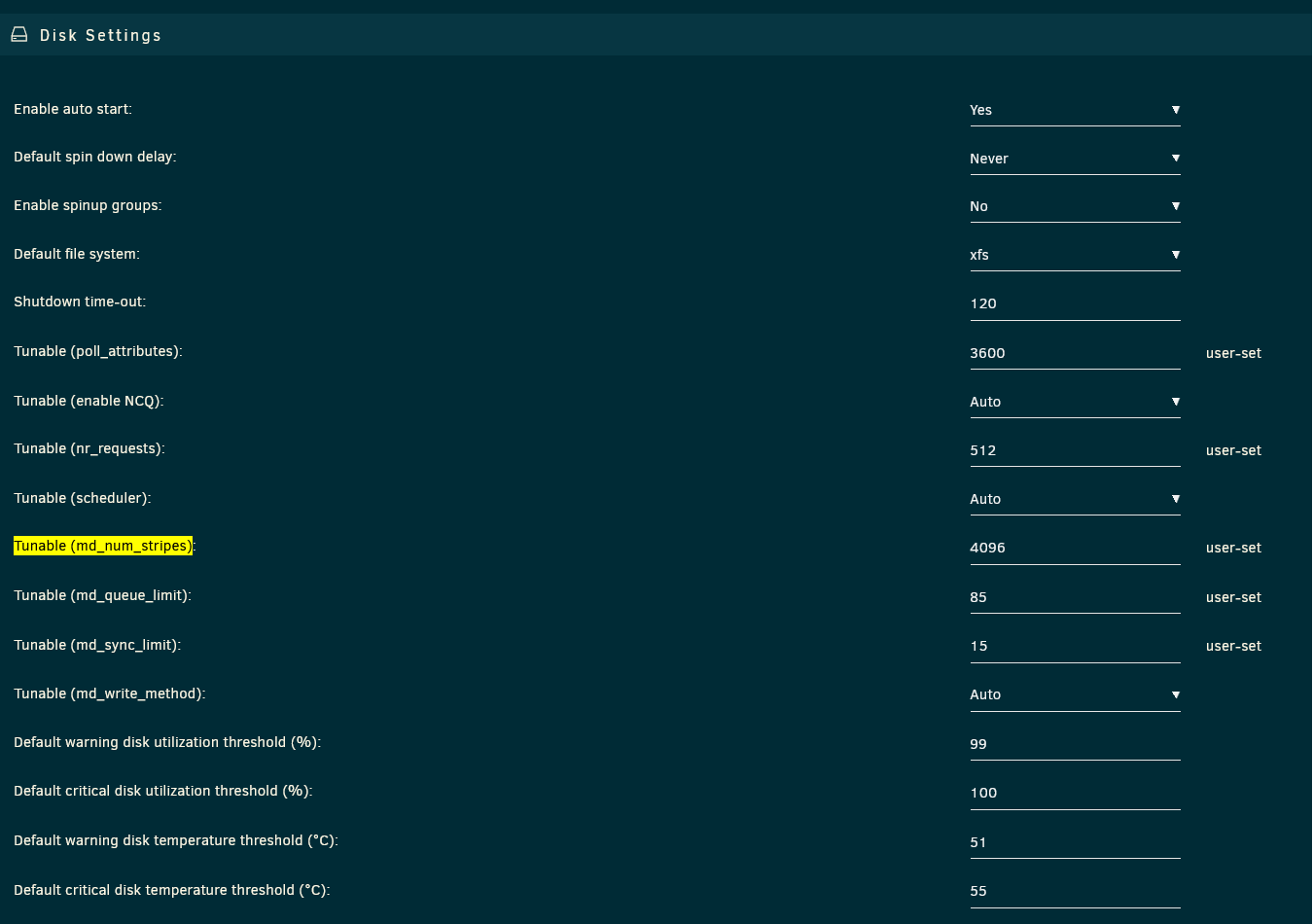

As you can see from the screen cap, I’m only getting 75-80 MB/s write performance, even on the SAS drives. The ST16000NM002G_ZL23FDAT (sde) and ST16000NM002G_ZL23H06Y (sdc) are the SAS HDDs.
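For anyone who wants a number that doesn't come from the UI, here is a rough sketch of a sequential write test in Python. It assumes Python 3 is available on the machine doing the test; the function name `measure_write_mb_s` and the default sizes are made up for illustration. It writes a temporary file, forces it to disk with `fsync`, and reports MB/s. Treat it as a sanity check, not a proper benchmark.

```python
import os
import tempfile
import time


def measure_write_mb_s(directory, total_mb=64, chunk_mb=4):
    """Write about total_mb of random data to a temp file in `directory`
    and return the observed throughput in MB/s (1 MB = 1024 * 1024 bytes here).

    Hypothetical helper for illustration; sizes are arbitrary defaults.
    """
    chunk = os.urandom(chunk_mb * 1024 * 1024)  # random data, not just zeros
    chunks = total_mb // chunk_mb
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            for _ in range(chunks):
                f.write(chunk)
            f.flush()
            os.fsync(f.fileno())  # make sure the data actually reached the disk
        elapsed = time.perf_counter() - start
    finally:
        os.remove(path)  # clean up the temp file
    return (chunks * chunk_mb) / elapsed
```

Point `directory` at a folder on the disk or share you want to test and use a `total_mb` large enough that caching doesn't dominate the result.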

I thought it was the HBA and looked around. The guy at ‘Art of the Server’ had a video about updating the really old FW and BIOS, so I did that too, only via the Windows StorCLI64.exe.

QQ: Shouldn’t the write performance be better on the SAS HDDs? What kind of write performance are SAS-only users seeing in UnRAID?

_________________

StorCLI or StorCLI64.exe (Windows)

If you come upon this post, here’s the PRO tip: put ‘Storcli64.exe’ in the root of your C:\ drive along with the 3 firmware/BIOS files you want to flash.

Broadcom LSI 9400-8i FW Files:

mpt35sas_legacy.rom

mpt35sas_x64.rom

HBA_9400-8i_Mixed_Profile.bin

c:/storcli/storcli64 /c0 download file=HBA_9400-8i_Mixed_Profile.bin

Downloading image.Please wait…

CLI Version = 007.3503.0000.0000 Aug 05, 2025

Operating system = Windows 11

Controller = 0

Status = Success

Description = CRITICAL! Flash successful. Please power cycle the system for the changes to take effect

____________________________________________________________________

c:/storcli/storcli64 /c0 download bios file=mpt35sas_legacy.rom

Downloading image. Please wait…

CLI Version = 007.3503.0000.0000 Aug 05, 2025

Operating system = Windows 11

Controller = 0

Status = Success

Description = Bios Flash Successful

_______________________________________________________________________

c:/storcli/storcli64 /c0 download efibios file=mpt35sas_x64.rom

Downloading image.Please wait…

CLI Version = 007.3503.0000.0000 Aug 05, 2025

Operating system = Windows 11

Controller = 0

Status = Success

Description = EFI Bios Flash Successful

_______________________________________________________________________
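The three flashes above follow the same pattern, so here is a small Python sketch that just builds the three command lines in the order I ran them (firmware, then legacy BIOS, then EFI BIOS). The helper name `build_flash_commands` is made up for illustration, and it only prints the commands; it does not run StorCLI. The file names and the `/c0 download ...` forms are the ones from this post, so adjust them if your controller number or files differ.

```python
def build_flash_commands(storcli, controller=0):
    """Return the three StorCLI command lines from this post, in order.

    Hypothetical helper: it only builds argument lists, it never runs them.
    """
    # (image type keyword, file name); the firmware image takes no keyword
    steps = [
        ("", "HBA_9400-8i_Mixed_Profile.bin"),
        ("bios", "mpt35sas_legacy.rom"),
        ("efibios", "mpt35sas_x64.rom"),
    ]
    commands = []
    for kind, filename in steps:
        cmd = [storcli, f"/c{controller}", "download"]
        if kind:
            cmd.append(kind)
        cmd.append(f"file={filename}")
        commands.append(cmd)
    return commands


# Print the commands so they can be reviewed before running them by hand.
for cmd in build_flash_commands("c:/storcli/storcli64"):
    print(" ".join(cmd))
```

Printing instead of executing is deliberate: each flash should report Status = Success before you move on to the next one, and the firmware flash asks for a power cycle afterwards.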