I could not find much on whether it is better to use an HBA in a PCIe x8 slot or one of the HBAs integrated on the motherboard, like the LSI3008, which is attached directly to the CPU.

Supermicro’s H12SSL and H13SSL come in several versions, some with and some without the LSI3008.

Using TrueNAS Scale, which would give the best performance: the integrated HBA or a PCIe x8 HBA such as the 9500-16i or 9600-16i?

Also, are the 9670W-16i and 9405W-16i supported by TrueNAS Scale, given that they are PCIe x16 cards? And would either be the equivalent of the integrated HBA?

I am aware they will all need to be flashed to IT mode.

It seems to me that the Supermicro boards have better support for bifurcation on the slots than the Tyan boards. However, unlike on the Tyan boards, the M.2 slots on the Supermicro boards seem to sit across the PCIe slots. I noticed that several people had difficulty using the Supermicro boards because the M.2 NVMe drives got in the way, especially with full-length PCIe cards.

Thanks

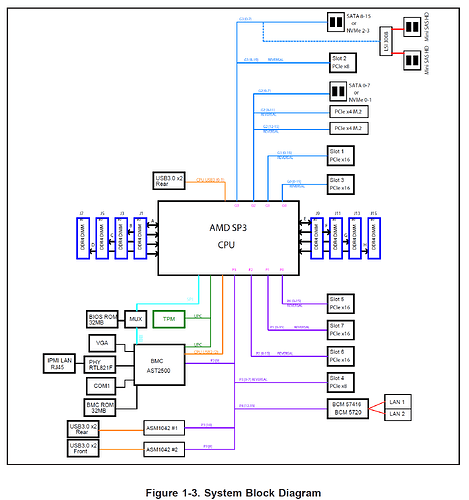

The Full build X11SPH and the H12SSL-C/CT motherboards each have a single SAS3008 controller. In both cases, based on the block diagrams in the manuals, that controller is connected to the CPU by a PCIe Gen 3 x8 link. I can’t see a version of the H13SSL with a SAS3008 controller, but I didn’t look hard.

“-16i” HBAs have two SAS3008 controllers. For full throughput they want to live in an electrical x16 slot. If you put one in an electrical x8 slot, the theoretical bandwidth is potentially reduced, because the card has to do the equivalent of multiplexing two drives over each PCIe lane (16 drives over 8 lanes). Note, though, that this is moot with spinning rust, as two spinning HDDs won’t saturate a single PCIe Gen 3 lane (985 MB/s).
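The 985 MB/s figure comes from the PCIe 3.0 signalling rate of 8 GT/s per lane and its 128b/130b encoding. A minimal Python sketch of that arithmetic follows; the HDD rate of 280 MB/s is an assumed round number for a large modern drive, not a measurement, and protocol overhead is ignored, so real throughput will be somewhat lower.

```python
# Theoretical one-direction payload bandwidth of a single PCIe 3.0 lane.
# Protocol overhead (TLP headers, flow control) is ignored, so real numbers are lower.
GEN3_GT_PER_S = 8.0              # PCIe 3.0 signalling rate per lane
ENCODING_128B_130B = 128 / 130   # PCIe 3.0/4.0 line-encoding efficiency


def lane_mb_per_s(gt_per_s: float) -> float:
    """Payload bandwidth of one lane in MB/s (1 MB = 10**6 bytes)."""
    return gt_per_s * 1e9 * ENCODING_128B_130B / 8 / 1e6


gen3_lane = lane_mb_per_s(GEN3_GT_PER_S)

# Assumed sustained sequential rate of one large HDD (hypothetical figure).
HDD_MB_PER_S = 280
DRIVES_PER_LANE = 2  # 16 drives sharing an x8 link

print(f"PCIe 3.0 lane: {gen3_lane:.0f} MB/s")
print(f"Two HDDs need {DRIVES_PER_LANE * HDD_MB_PER_S} MB/s; fits in one lane: "
      f"{DRIVES_PER_LANE * HDD_MB_PER_S < gen3_lane}")
```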

You’d need to check the block diagram for the motherboard you are considering, but in general there seems to be no difference when talking about a single SAS3008 controller, and only slightly lower performance from a -16i card in an electrical x8 slot, which matters only if the drives connected to it are SSDs capable of sustained reads/writes of about 490 MB/s or more.
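The ~490 MB/s threshold is the x8 link divided evenly among 16 drives (8 × 985 MB/s ÷ 16 ≈ 492 MB/s). A rough Python sketch, assuming all drives stream at the same time and share the link evenly; the helper names and the drive rates in the example calls are hypothetical, and controller (IOC) limits are not modelled:

```python
# Even split of a PCIe 3.0 x8 host link across the drives behind one HBA.
GEN3_LANE_MB_S = 8e9 * (128 / 130) / 8 / 1e6  # ~985 MB/s per PCIe 3.0 lane


def per_drive_share(link_lanes: int, drives: int,
                    lane_mb_s: float = GEN3_LANE_MB_S) -> float:
    """Host-link bandwidth available to each drive when all stream at once."""
    return link_lanes * lane_mb_s / drives


def link_limited(drive_mb_s: float, link_lanes: int, drives: int) -> bool:
    """True if the host link, rather than the drives, caps aggregate throughput."""
    return drive_mb_s > per_drive_share(link_lanes, drives)


share = per_drive_share(link_lanes=8, drives=16)
print(f"Per-drive share, Gen3 x8, 16 drives: {share:.0f} MB/s")
print("HDD-class drive at 280 MB/s limited:", link_limited(280, 8, 16))
print("SATA-SSD-class drive at 550 MB/s limited:", link_limited(550, 8, 16))
```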

On the H12SSL-C/CT motherboards there is one LSI 3008 SAS controller. It does not appear to be connected through a PCIe x8 slot but directly to the CPU. See the attached block diagram.

The 9500-16i and 9502-16i are PCIe Gen 4.0 x8 cards; they use the SAS3816 and have two SFF-8654 8-port connectors.

The theoretical bandwidth of a Gen 4.0 x8 card is about 16 GB/s.

The 9600-16i and 9620-16i are also PCIe Gen 4.0 x8 cards; they use the SAS4016 and have two SFF-8654 8-port internal connectors.

A PCIe Gen4 x16 card like the 9670W-16i or 9405W-16i increases the bandwidth to about 32 GB/s. However, they are MegaRAID cards, not HBAs, so I guess that is a moot point.
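Both the 16 GB/s and 32 GB/s figures are rounded. PCIe 4.0 runs at 16 GT/s per lane with the same 128b/130b encoding as Gen 3, which gives about 15.75 GB/s for x8 and about 31.5 GB/s for x16, per direction and before protocol overhead. A small Python sketch of that calculation (the function name is illustrative only):

```python
import math

GEN4_GT_PER_S = 16.0            # PCIe 4.0 signalling rate per lane
ENCODING_128B_130B = 128 / 130  # same line encoding as PCIe 3.0


def link_gb_per_s(gt_per_s: float, lanes: int) -> float:
    """Theoretical one-direction payload bandwidth in GB/s (10**9 bytes)."""
    return gt_per_s * lanes * ENCODING_128B_130B / 8


x8 = link_gb_per_s(GEN4_GT_PER_S, 8)
x16 = link_gb_per_s(GEN4_GT_PER_S, 16)
print(f"Gen4 x8:  {x8:.2f} GB/s")   # about 15.75
print(f"Gen4 x16: {x16:.2f} GB/s")  # about 31.51
print("x16 doubles x8:", math.isclose(x16, 2 * x8))
```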

My assumption is that if I use one of the Gen4 x8 cards in a PCIe x16 slot, there will not be much difference compared with the integrated SAS3008 connected to the CPU.

The last question: would it be better to get two 9600-16i cards and use two PCIe x16 slots for maximum throughput?
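Whether a second card helps depends on whether the combined drive throughput exceeds one card's host link. A rough Python sketch, assuming each HBA sits in its own slot with dedicated CPU lanes (worth confirming in the board's block diagram), that drives are split evenly across cards, and that the controllers themselves are not the bottleneck; the drive rates in the example calls are hypothetical:

```python
GEN4_X8_GB_S = 16.0 * 8 * (128 / 130) / 8  # ~15.75 GB/s host link per Gen4 x8 HBA


def demand_gb_s(drives: int, drive_mb_s: float) -> float:
    """Aggregate sequential demand if every drive streams at once."""
    return drives * drive_mb_s / 1000


def achievable_gb_s(num_hbas: int, drives: int, drive_mb_s: float,
                    link_gb_s: float = GEN4_X8_GB_S) -> float:
    """Throughput is capped by whichever is lower: total host links or total drive demand."""
    return min(num_hbas * link_gb_s, demand_gb_s(drives, drive_mb_s))


# 16 HDD-class drives (assumed 280 MB/s each): one card is far from its limit.
print(f"{achievable_gb_s(1, 16, 280):.2f} GB/s")
# 16 fast SAS-SSD-class drives (assumed 1100 MB/s each): one card caps the total...
print(f"{achievable_gb_s(1, 16, 1100):.2f} GB/s")
# ...while two cards, eight drives each, let the drives run at full speed.
print(f"{achievable_gb_s(2, 16, 1100):.2f} GB/s")
```

In other words, a second card only pays off once the drives behind one card can collectively outrun its x8 Gen4 link.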


The SAS3008 uses eight PCIe lanes and runs them at Gen 3 speed, whether it is on the motherboard or on an HBA. If you have an HBA that uses PCIe Gen 4 and a CPU that supports PCIe Gen 4 (in your case, apparently an AMD EPYC Zen 2), that will give you, theoretically, much higher bandwidth through the HBA. The question is whether you are connecting SAS or NVMe SSDs that are actually capable of saturating that bandwidth.
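The generation point can be made concrete: a PCIe link trains to the highest speed and width that both the card and the slot support, so a Gen 4 HBA only gets Gen 4 bandwidth in a Gen 4 slot on a Gen 4 CPU. A simplified Python sketch, assuming ideal link training and ignoring protocol overhead (the function name is illustrative):

```python
# Per-lane signalling rate in GT/s; PCIe 3.0 and 4.0 both use 128b/130b encoding.
GT_PER_S = {3: 8.0, 4: 16.0}


def negotiated_gb_s(card_gen: int, card_lanes: int,
                    slot_gen: int, slot_lanes: int) -> float:
    """Theoretical one-direction bandwidth of the link the card and slot agree on."""
    gen = min(card_gen, slot_gen)        # link runs at the lower generation
    lanes = min(card_lanes, slot_lanes)  # and at the narrower electrical width
    return GT_PER_S[gen] * lanes * (128 / 130) / 8


cases = {
    "Onboard SAS3008 (Gen3 x8)": (3, 8, 3, 8),
    "Gen4 x8 HBA in Gen4 x16 slot": (4, 8, 4, 16),
    "Gen4 x8 HBA in Gen3 x16 slot": (4, 8, 3, 16),
}
for name, args in cases.items():
    print(f"{name}: {negotiated_gb_s(*args):.2f} GB/s")
```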

What are you actually trying to do? What media are you trying to connect: 12 Gb/s SAS drives?
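For scale on the SAS side: 12 Gb/s SAS (SAS-3) uses 8b/10b encoding, so each lane carries roughly 1.2 GB/s. A short Python sketch comparing 16 such lanes with PCIe 3.0 and 4.0 x8 host links, assuming one lane per drive and ignoring SAS and PCIe protocol overhead:

```python
SAS3_GBIT_PER_S = 12.0  # 12 Gb/s SAS line rate per lane
SAS3_ENCODING = 8 / 10  # SAS-3 uses 8b/10b encoding

sas_lane_mb_s = SAS3_GBIT_PER_S * 1000 * SAS3_ENCODING / 8  # ~1200 MB/s
drive_side_gb_s = 16 * sas_lane_mb_s / 1000                 # a "-16i" card, one lane per drive

# Host-side PCIe x8 links (128b/130b encoding), GB/s per direction.
host_links = {
    "PCIe 3.0 x8": 8.0 * 8 * (128 / 130) / 8,
    "PCIe 4.0 x8": 16.0 * 8 * (128 / 130) / 8,
}

print(f"One 12G SAS lane: {sas_lane_mb_s:.0f} MB/s")
print(f"16 SAS lanes: {drive_side_gb_s:.1f} GB/s")
for name, gb_s in host_links.items():
    print(f"{name}: {gb_s:.2f} GB/s, host link is the cap: {drive_side_gb_s > gb_s}")
```

So the host link only becomes the limit if most of the 16 drives can sustain close to full 12G lane speed at the same time, which HDDs never do.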

I am trying not to buy the minimum but to plan for the future. So if two HBA cards would be better than one, I would like to plan for that in slots, motherboard, and power, so that as I add capacity I get the best performance.

So yes, the plan is to upgrade over time as prices drop.

Thanks for your replies; they have been very helpful.